Ignore, Trust, or Negotiate: Understanding Clinician Acceptance of AI-Based Treatment Recommendations in Health Care

Published at CHI 2023 | Hamburg, Germany

Abstract

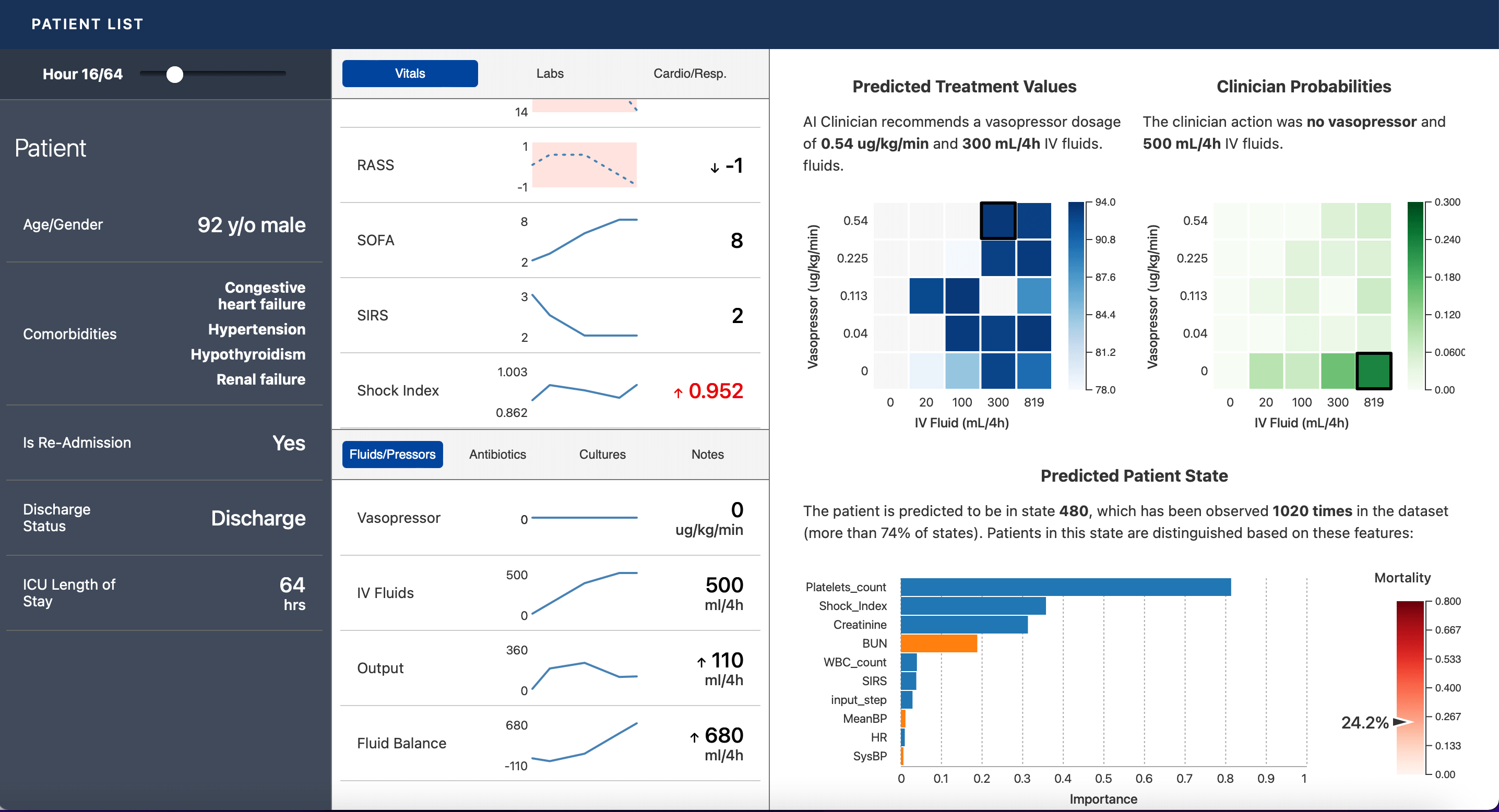

Artificial intelligence (AI) in healthcare has the potential to improve patient outcomes, but clinician acceptance remains a critical barrier. We developed a novel decision support interface that provides interpretable treatment recommendations for sepsis, a life-threatening condition in which decisional uncertainty is common, treatment practices vary widely, and poor outcomes can occur even with optimal decisions. This system formed the basis of a mixed-methods study in which 24 intensive care clinicians made AI-assisted decisions on real patient cases. We found that explanations generally increased confidence in the AI, but clinicians' concordance with specific recommendations went beyond the binary acceptance or rejection described in prior work. Although clinicians sometimes ignored or trusted the AI, they also often prioritized which aspects of the recommendations to follow, reject, or delay, a process we term "negotiation." These results reveal novel barriers to the adoption of treatment-focused AI tools and suggest ways to better support differing clinician perspectives.